Halo

Exposing and countering consent theater — a privacy tool built on original research into the gap between stated policy and actual practice.

The Problem

Social media platforms are built on a fundamental asymmetry of information. The platforms know everything about their users. Users know almost nothing about what is being collected, who it is shared with, or how it shapes what they see. Consent banners are designed to be dismissed rather than read. Privacy settings are split across multiple menus and default to maximum data sharing. Age verification systems collect sensitive government documents with no transparency about retention. And when users try to leave, the delete account option is buried so deep it functions as a deliberate barrier.

Halo is a fictional social media app designed to show what privacy-first design looks like when genuine user consent replaces compliance theater.

Research

Research for Halo drew on primary investigation and extensive secondary sources. Primary research included hands-on network traffic analysis using Proxyman, which revealed third-party tracking infrastructure operating across major platforms, invisible to users. This fieldwork directly informed two original frameworks I developed: consent theater, which describes the gap between the appearance of user consent and genuine informed agreement, and accountability laundering, which describes how responsibility for data misuse is deliberately distributed across enough parties that no single actor can be held accountable. Regulators have since used similar language in enforcement actions, which lends support to both frameworks.

Secondary research included:

- Four Reddit threads from r/privacy on age verification, forced data sharing, breach fatigue, and a Google patent filed in March 2026

- Academic papers on dark patterns taxonomy, consent design, and manipulative interface design

- News articles covering Meta and TikTok DSA violations, TikTok's 530 million euro GDPR fine, Meta's 200 million euro DMA fine, and SHEIN's 150 million euro CNIL fine

- Regulatory frameworks including GDPR, DSA, DMA, CCPA and CPRA, and FTC enforcement actions

- Competitive analysis of TikTok, Instagram, and Facebook, all of which share the same dark pattern failures

Meta Privacy Center, which requires navigation through multiple menus and subsections before reaching any actionable control.

Key Insights

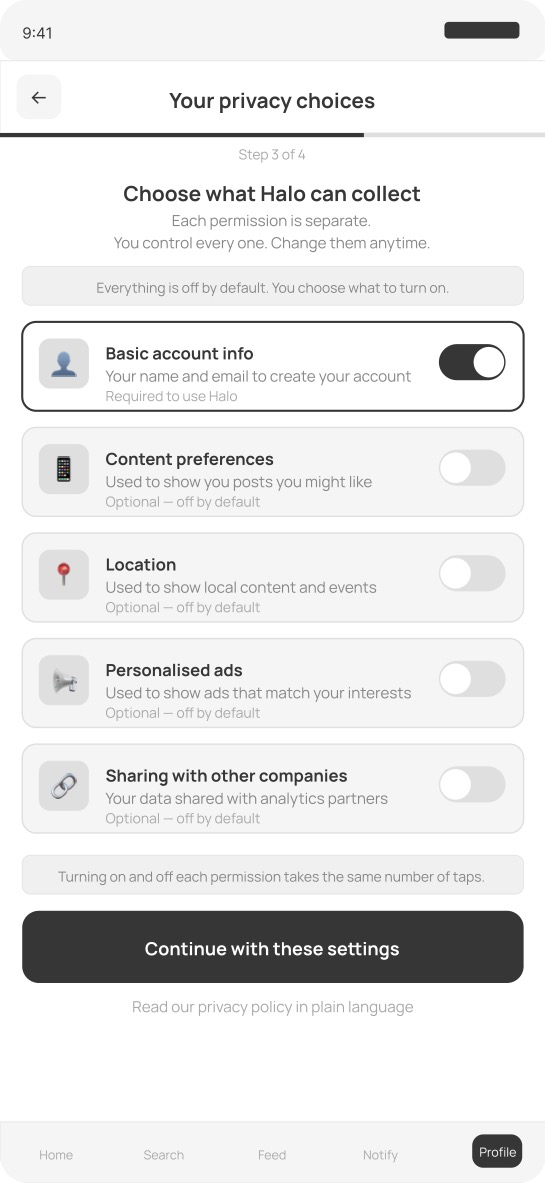

- Consent is designed to be bypassed. Accept All is visually dominant, Reject All requires more taps, and pre-checked boxes assume agreement rather than obtaining it

- Third-party tracking is invisible by design. Users have no way to see what is being collected or who receives it without technical tools most people do not have

- Age verification systems solve a narrow regulatory problem by creating a much larger privacy problem. Government ID documents collected at scale become surveillance infrastructure

- Accountability is designed to be diffuse. When data is misused, responsibility is spread across so many parties that no single actor can be held responsible

- Privacy settings placed three menus deep are not accessible. They are theater

- The gap between what companies say about privacy and what they actually do is not a communication problem. It is a design decision

Design Process

I mapped the current-state experience of a general social media user across eight journey stages, from downloading the app through attempting to delete their account. The journey map revealed a consistent pattern across every stage: the platform is designed to make data collection effortless and invisible while making privacy protection effortful and obscure. The most important design decision in Halo was to reverse that asymmetry entirely. Protection is the default. Collection requires active opt-in. Every stage of the journey map has a direct corresponding design response in the solution.

Solution

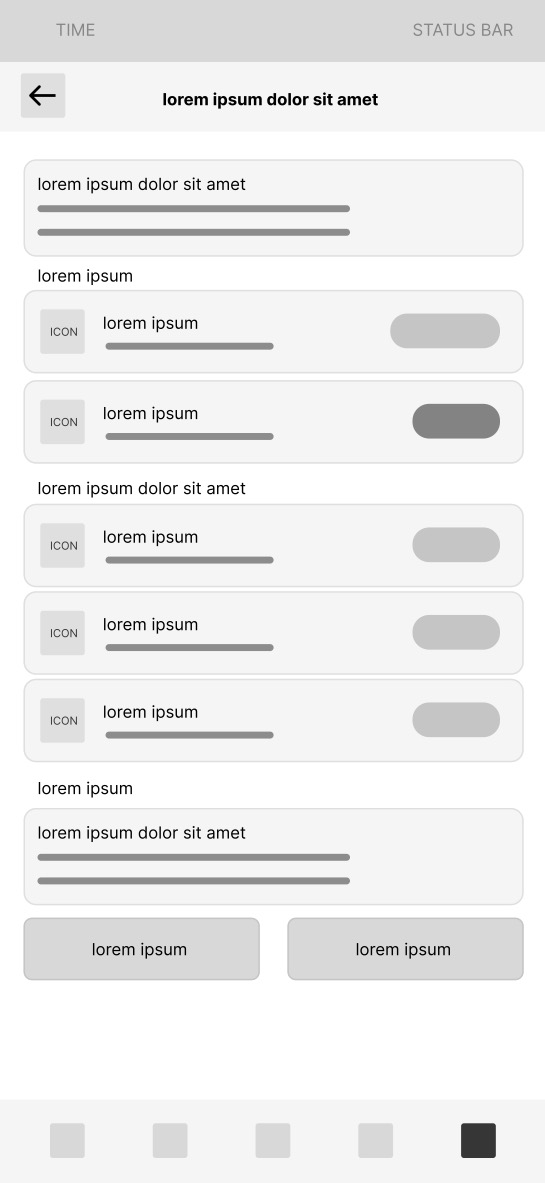

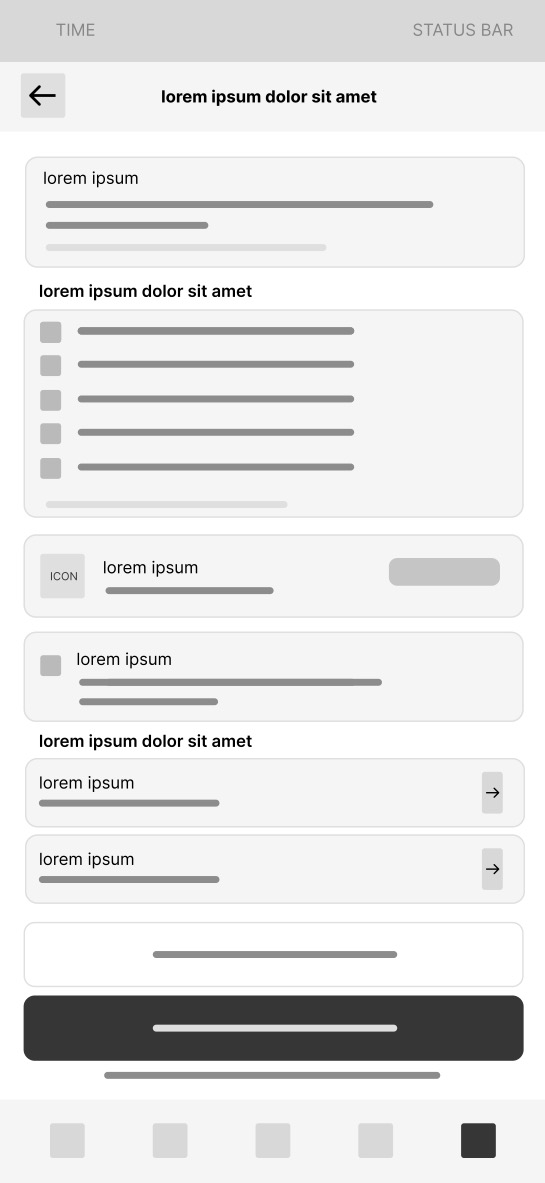

Screen 1: Onboarding consent flow

All permissions are off by default. Each permission is a separate card with a plain-language description. Basic account data is the only required permission. Accepting and declining each permission takes the same number of taps. There are no pre-checked boxes.
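The defaults described for this screen can be sketched as a small data model. This is only an illustration, not part of the Halo prototype: the `Permission` class, the helper functions, and every permission name other than basic account data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Permission:
    key: str                # hypothetical identifier for the card
    description: str        # plain-language text shown on the card
    required: bool = False  # only basic account data is required
    granted: bool = False   # every optional permission starts off


def default_permissions() -> list[Permission]:
    """Return the onboarding cards in their initial state."""
    return [
        # Assumption: the one required permission is treated as granted,
        # since an account cannot exist without it.
        Permission("basic_account", "Your email and username, needed to sign in.",
                   required=True, granted=True),
        Permission("contacts", "Find friends from your contacts."),
        Permission("location", "Show posts from people nearby."),
        Permission("personalised_ads", "Use your activity to choose ads."),
    ]


def set_permission(perms: list[Permission], key: str, granted: bool) -> None:
    """Accept or decline one card. Both directions are the same single call."""
    for perm in perms:
        if perm.key == key:
            if perm.required and not granted:
                raise ValueError(f"{key} is required and cannot be declined")
            perm.granted = granted
            return
    raise KeyError(key)
```

Because accepting and declining go through the same function with one boolean flipped, neither choice can cost the user more steps than the other.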

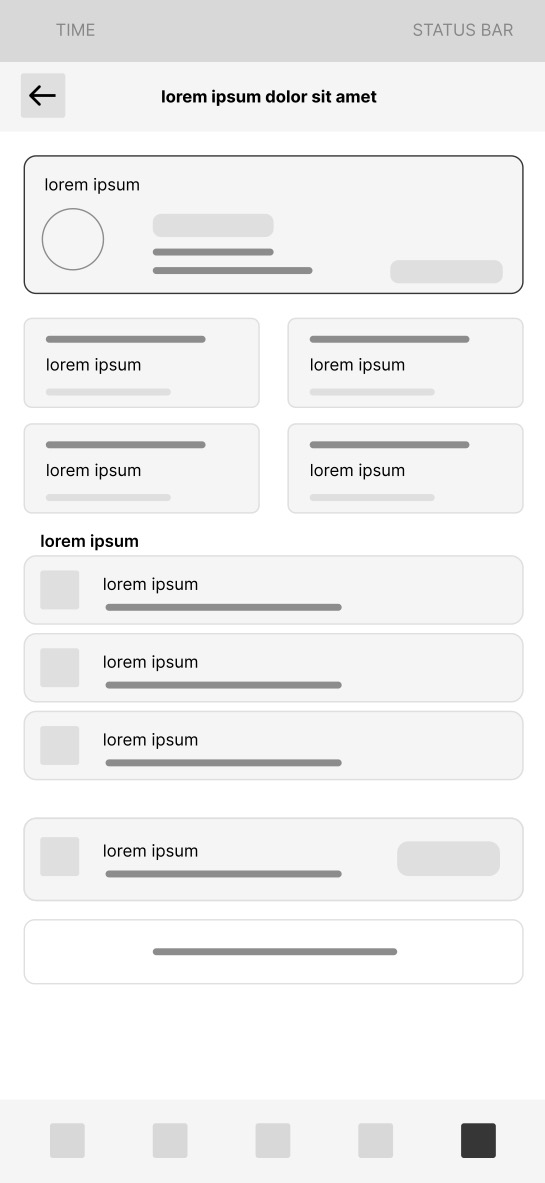

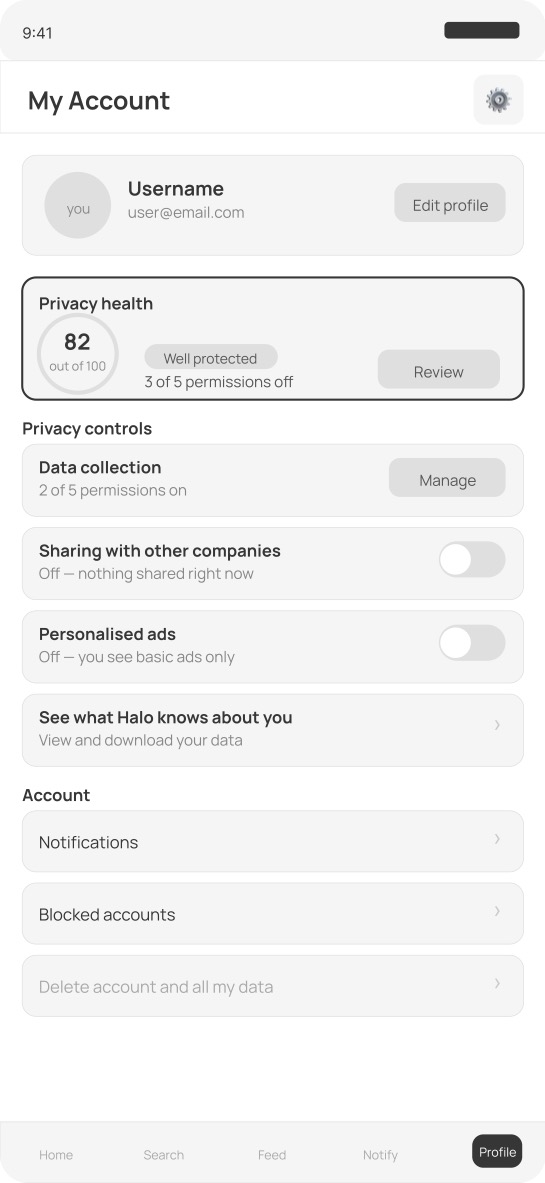

Screen 2: Privacy settings on the account screen

A privacy health score is visible directly on the account screen, not buried in settings. All privacy controls are in one place. Third-party sharing and personalised ads are off by default. A data transparency link gives users direct access to everything Halo holds about them.

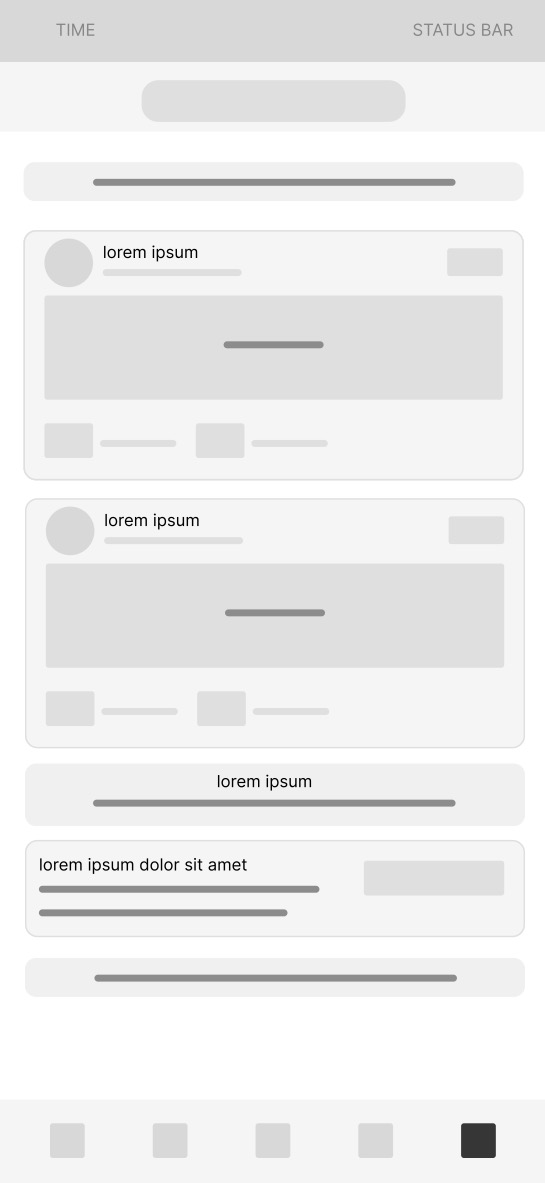

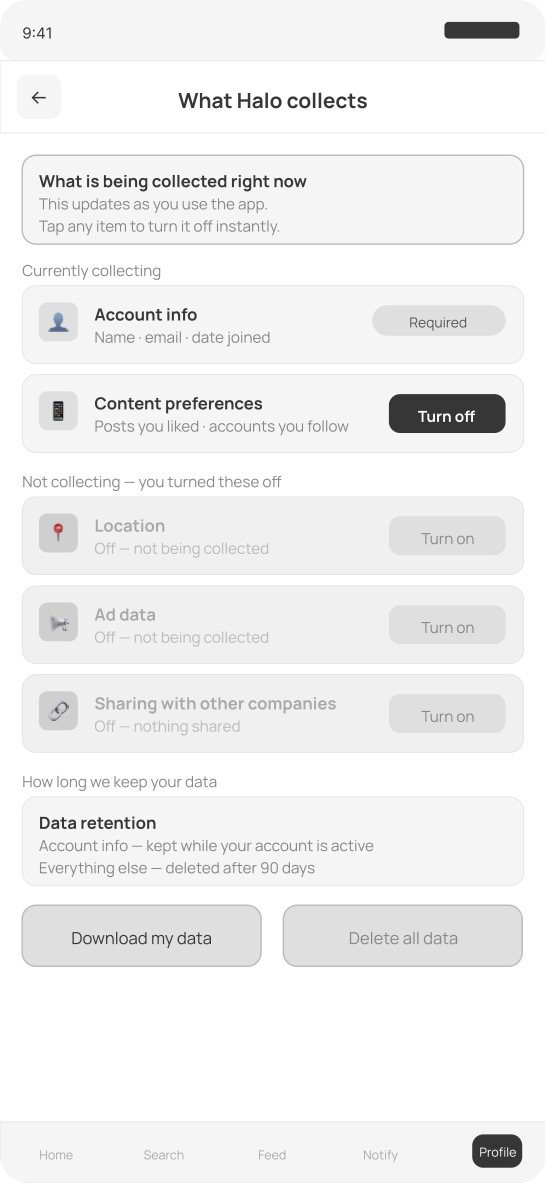

Screen 3: Real-time data transparency panel

What Halo is collecting is shown in real time, clearly separated from what the user has turned off. Active items have a revoke button. Inactive items have a turn-on option. The data retention policy is stated in plain language. Download and delete data are both accessible from one screen.

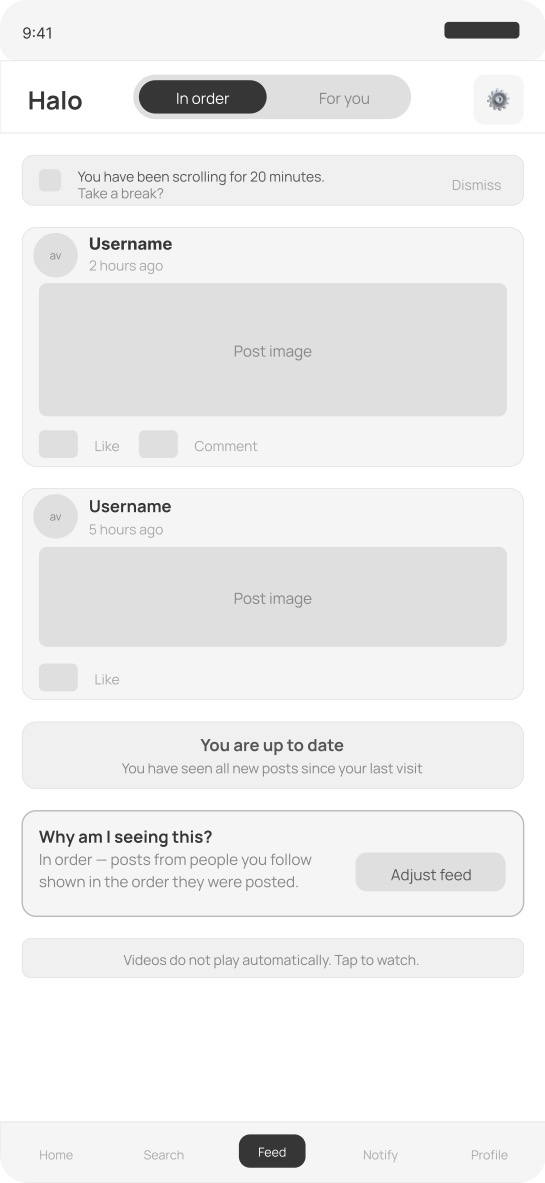

Screen 4: Feed with algorithm controls

Chronological feed is the default. There is no autoplay. A natural stopping point appears when the user has seen all new posts. An algorithm transparency panel explains why posts appear. A wellbeing nudge appears after twenty minutes of continuous scrolling.
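The feed rules for this screen are simple enough to sketch in a few lines. The code below is an assumption-laden illustration rather than the prototype's logic: the post dictionaries, the `posted_at` field, and all function names are hypothetical. Only the twenty-minute threshold comes from the design.

```python
from datetime import datetime, timedelta

NUDGE_AFTER = timedelta(minutes=20)  # threshold stated in the screen design


def chronological(posts: list[dict]) -> list[dict]:
    """Order posts newest first, using only the time they were posted."""
    return sorted(posts, key=lambda post: post["posted_at"], reverse=True)


def unseen_posts(posts: list[dict], last_visit: datetime) -> list[dict]:
    """Posts newer than the last visit. An empty list is the stopping point."""
    return [post for post in chronological(posts) if post["posted_at"] > last_visit]


def should_nudge(scroll_started: datetime, now: datetime) -> bool:
    """True once continuous scrolling has lasted twenty minutes or more."""
    return now - scroll_started >= NUDGE_AFTER
```

No engagement signal enters the sort key, so in this sketch the answer to "why am I seeing this post" is always the same: it is the next most recent one.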

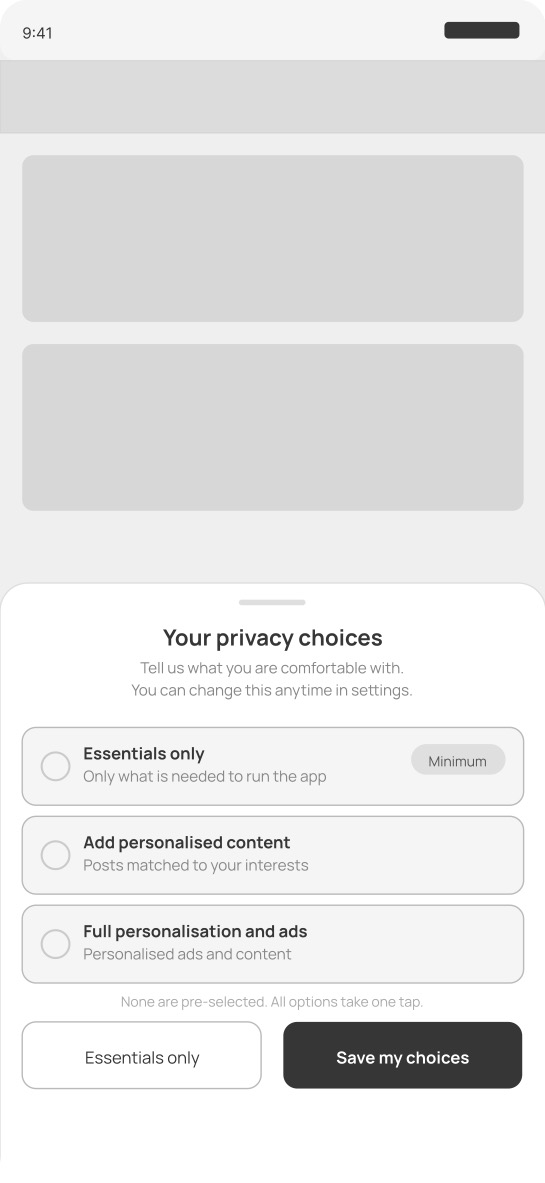

Screen 5: Cookie and tracking consent banner

The consent banner is a bottom sheet with three clearly explained options. None are pre-selected. Accept and decline buttons have equal visual weight and take the same number of taps. There are no consent walls blocking app access.

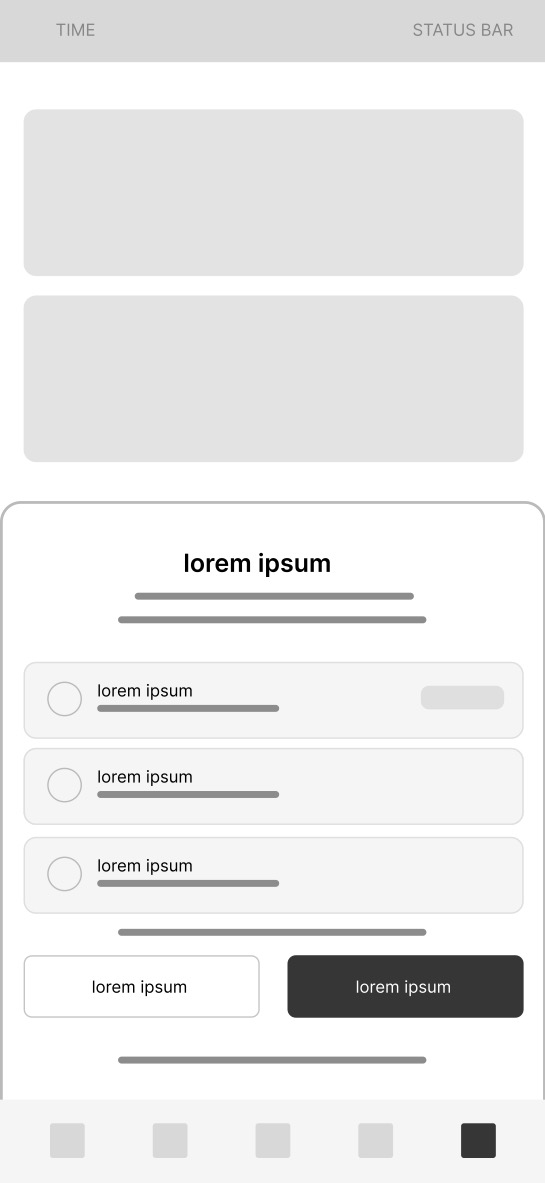

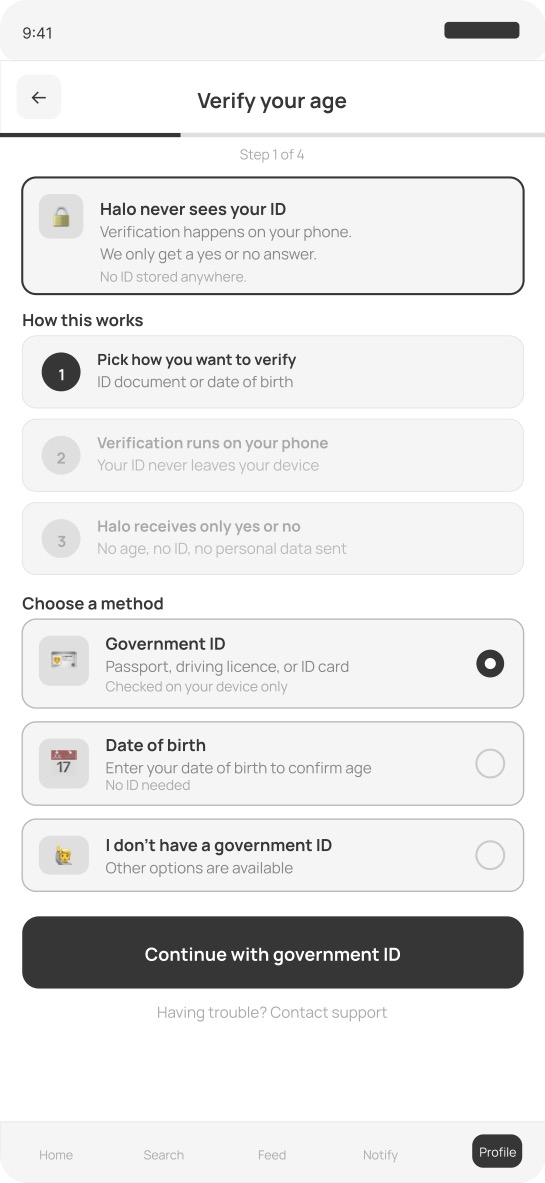

Screen 6: Age verification flow

A prominent privacy promise card appears before any verification begins. Halo never sees the ID. Verification happens on device only. Halo receives only a yes-or-no result. Three verification methods are available, including one for users without government ID.
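The data-minimisation idea behind this screen can be shown with a short sketch. It assumes the device has already obtained a birth date from whichever verification method the user chose. The function names, the payload field, and the default minimum age of 13 are all hypothetical and not taken from the Halo design.

```python
from datetime import date


def is_old_enough(birth_date: date, today: date, minimum_age: int) -> bool:
    """Runs on the device. The birth date is used here and never transmitted."""
    years = today.year - birth_date.year
    # Subtract a year if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years >= minimum_age


def verification_payload(birth_date: date, today: date, minimum_age: int = 13) -> dict:
    """Build the only data sent to the server: a single yes-or-no field."""
    return {"meets_minimum_age": is_old_enough(birth_date, today, minimum_age)}
```

The payload contains no date, document image, or identifier, so there is nothing sensitive for the server to retain. A production system would likely also need a way for the server to trust the result, such as a signed attestation from the device, which is outside the scope of this sketch.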

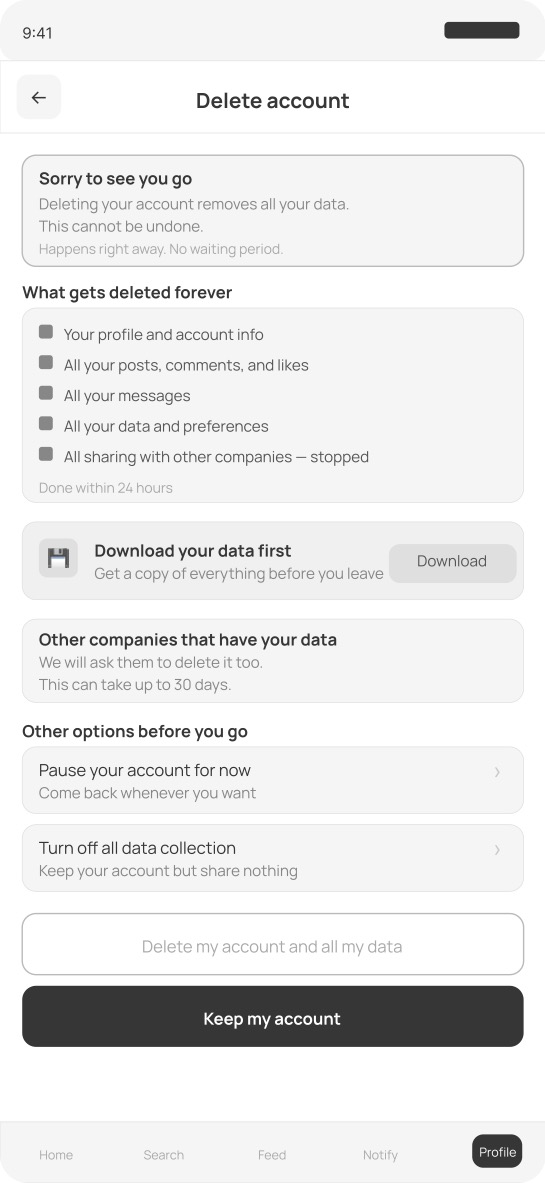

Screen 7: Account deletion and data removal

Delete account is easy to find. A complete list of what gets permanently deleted is shown before the user commits. A download-your-data option is available before deletion. Third-party data deletion is disclosed with a timeline. Two alternatives are offered before the final confirmation. The delete button is less prominent than the keep-my-account button, but this is honest UX, not a dark pattern in reverse.

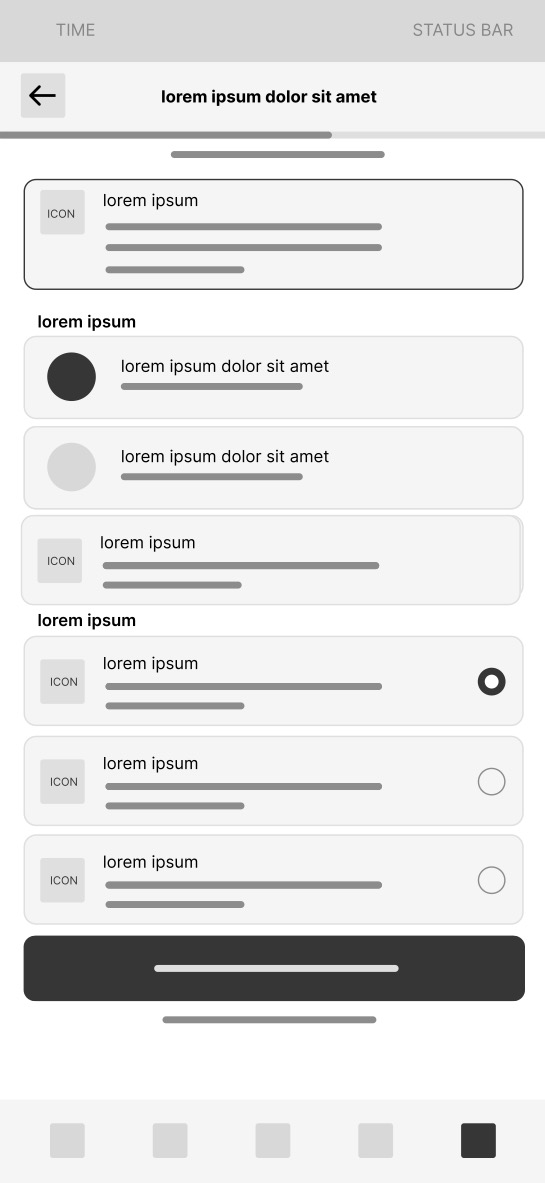

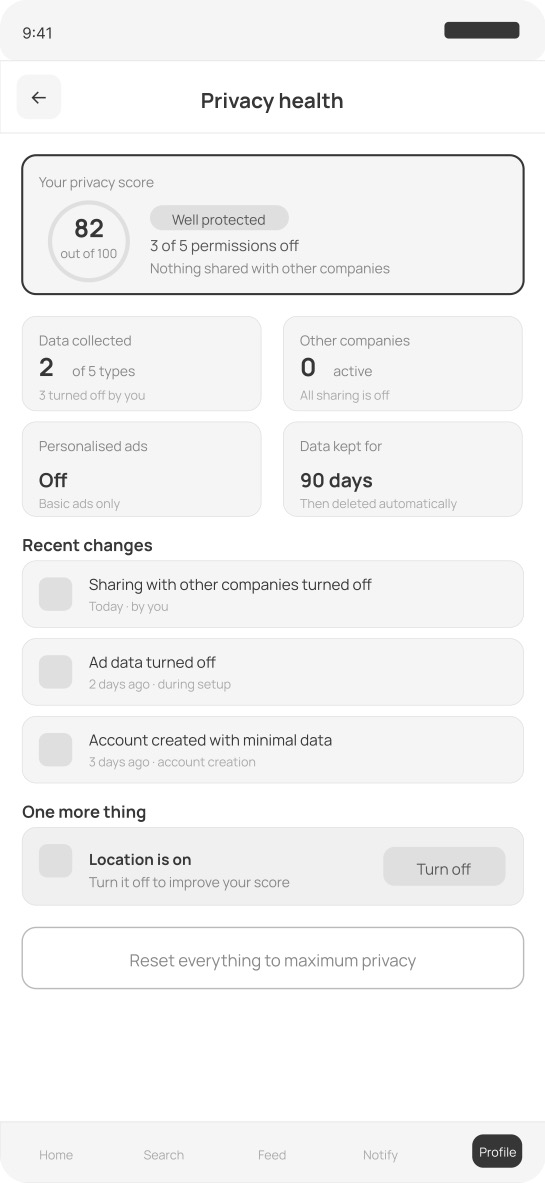

Screen 8: Privacy health dashboard

An overall privacy score with four metric cards shows data collected, third parties active, ad personalisation status, and data retention period at a glance. A recent privacy activity timeline shows every change made and when. A single suggestion for improving the score appears if relevant. A reset to maximum privacy button is always available.

Lo-Fi Wireframes

Mid-Fi Wireframes

Prototype


Outcome & Reflection

Halo demonstrates that privacy-first design is not a technical constraint. It is a set of design decisions that platforms have consistently made in the wrong direction because data collection drives revenue and genuine consent reduces it. The consent theater and accountability laundering frameworks I developed during research name that dynamic explicitly and provide a vocabulary for evaluating any platform's privacy design against its stated values.

The most challenging design problem in Halo was the age verification screen. Every existing approach to age verification trades one harm for another. Collecting government IDs creates a surveillance database. Self-reported dates of birth are unverifiable. The solution in Halo uses on-device verification that sends only a yes-or-no result, which sidesteps the data retention problem entirely. This is technically achievable today and simply has not been prioritized by platforms with no regulatory incentive to do so.

If I were to continue developing Halo, I would run usability tests on the onboarding consent flow to measure how many users engage with individual permissions versus accepting all defaults, and use Microsoft Clarity to track engagement with the privacy health dashboard over time.